Week 42 - An overabundance of toys

42 - the answer to life, the universe, and everything. It also comes after 41.

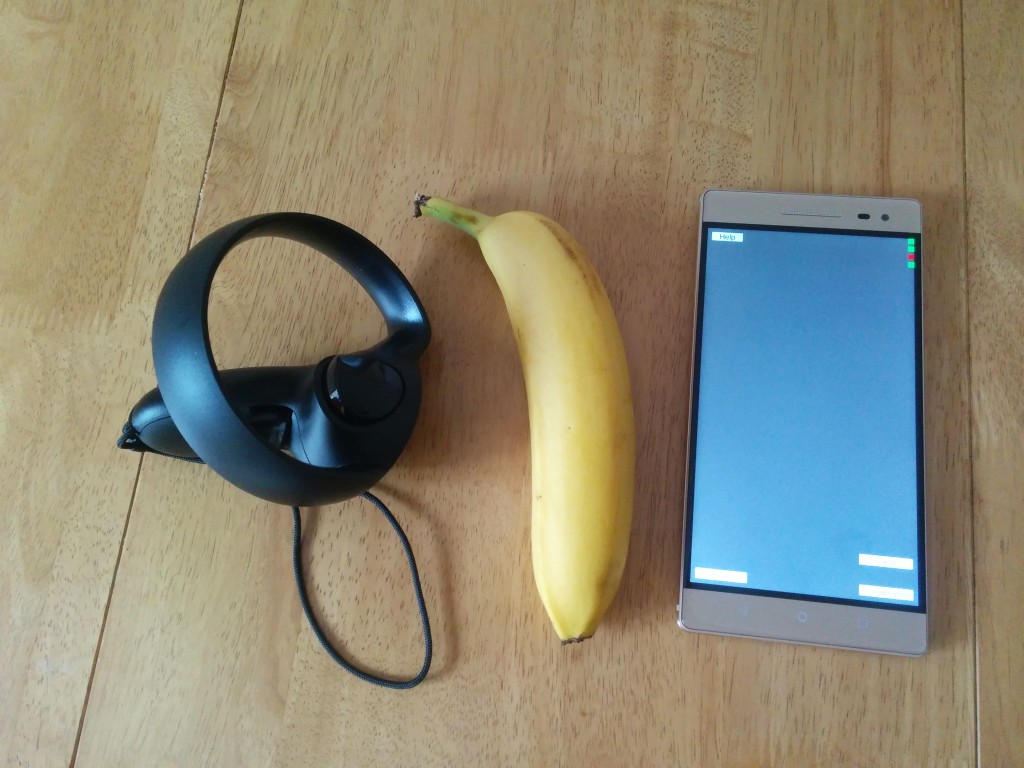

This week I’ve been releasing an Alpha candidate of Scanner, playing with Oculus Touch and, as of this morning, unboxing my Phab 2 Pro.

Shipping

Ideas are not worthless. I’d peg them somewhere between a penny and a pound. Doing something with the idea (commonly called “execution” which always struck me as a bit violent) tends to be worth more - but the processes of execution are a long, windy road. Or possibly, a maze of twisty passages, all alike. You’ve got your development, your build process, your release process, your feedback process, your marketing strategy, your pricing strategy, branding … and on, and on.

The end result of building, releasing and providing feedback channels means you can ship something to a customer. If you’re lucky, you might even make some money out of that. In my case, unlikely (for reasons I’ll come to shortly) but it’s at least possible.

Last week, a throwaway comment from someone I spoke to at the VR conference kicked me up the arse and forced the realisation that I should be shipping - even if I get nothing back in revenue, it’s going to be valuable to crank the handle and exercise the product muscles that have atrophied over the course of the year. At BA, we shipped constantly - with automated build processes, a whole team dedicated to Shipping and Release (shoutouts to Big Rion) and customer expectations of new content every week or so, we had it refined to an art.

Of course, I’ve forgotten most of that art, so it’s been fun re-learning it.

Shipping on Android Play Store

Getting an APK up onto the Play Store is actually surprisingly easy. You build your APK, you make sure your manifest settings and build versions are correct, you set up the app details on the store front (including icons, banner, info text, feedback channels and the like) and you hit “Publish”.

Except, not so fast, sucker! This thing is super-broken, and has a crappy UX to boot - so at the very least, it could do with a bit of testing before it goes out to the general public. And recently, Google has put a load of effort into making this a much easier task. You can set up closed and open Alpha and Beta tests, and expose only a select few users (or groups of users, using G+ and Google Groups) to the app before you ship. You can also generate promo codes, so I can list it as a paid app (you can switch from paid to free, but not the other way around) without testers having to fork out any moola to participate, which is awesome.

Scanner is now in closed Alpha, which means about 5 people in the world have downloaded it. Nice! So far, I’ve fixed about 20 issues thanks to their feedback (and the app is now almost running on the Phab 2 Pro, which I didn’t personally own until this morning). Being unable to test locally on a specific device is incredibly frustrating - every conversation starts with “did it work?”, and when the answer is no, you’re stuck trying to divine the reason, like a crazed wizard with a bowl of entrails and bones. I’m lucky that the testers are all developers themselves, so they understand things like adb logcat/shell, where to browse for log files, and how to send me screenshots. Still, using the Alpha channel on the Play Store, the turnaround between builds is at least 5 minutes, and potentially a few hours. Add to that the fact that most of my testers are in the US (and are not awake until my lunchtime, being most active as I’m doing the evening routine with the boys), and the result has been very slow progress fixing the core issues.

The very act of putting something out to a wider audience (even if that’s one other person) is hugely valuable. About 10 seconds after putting my first APK up on to the store, I began thinking of all the things I should have fixed before I put it up - turning off testing code, writing a log file to device for later recovery, disabling the multiplayer network code. I’ve spent a couple of days this week simply fixing stuff I should have fixed ages ago, but never got round to, because I could live with it in a crappy state. Real users don’t like crappy. My test buddies may allow some wrinkles to see if they get some kind of result from the app, but anyone else is just going to hit “one star” and uninstall if it doesn’t do what they expect first time.

As of this morning, I’m at version 1.0.0.7 (and due to rise in a few hours). My Big Ol Todo List is growing faster than I can handle, and it’s all good stuff.

Oculus Touch

So here I am, getting stuck into shipping something - and there’s a ding at the doorbell. Probably more things for my missus, I think - she’s a bit of an eBay addict. But it’s not! It’s my Oculus Touch controllers!

Of course, that means I have to down tools (tools being my fingers) and give them a whirl. And, as I’ve got my second PC set up, that’s a good opportunity to get the other desk in my garage prepared as a VR machine. A bit of cleanup later, and I’ve got a second “room scale” area prepared, both of the Oculus cameras set up, and I’m into the Touch introduction demo - which is really nice. At first, it reminded me of the Valve Lab demos (there’s a robot, and there are things to throw around) but it’s actually really nicely written. Very impressive as a first introduction to the tech.

The Touch controllers are excellent. It takes a little time to get used to grip, point, etc., but the devices are beautifully balanced, light, and have buttons and sticks in all the right places. With how I’ve set up my area, I get about 200 degrees of tracking freedom in a 1.5m by 1.5m area, which certainly isn’t anything like as free roaming as the Vive area, but it’s enough to play some games and mess around with Medium.

Oculus Medium

Medium is Oculus’s take on creating artistic content using the Touch controllers (think something a bit like Tilt Brush, and something a bit like ZBrush, mixed together). You can sculpt, squish and paint onto the surface of your creations. The tutorial is janky, but once you get a feel for it, it’s pretty damned awesome.

I would show you some of my creations, but unfortunately the output of the export process from Medium is an enormous OBJ file - about 10 minutes of noodling created a 130MB OBJ made of over 1 million verts and triangles. Even Sketchfab (my favourite 3D model host) barfs on content that large.

I spent a few hours trying to get models pulled into Blender so I could downrez them, but that process is full of annoyance and pain - Blender (and Unity) don’t support per-vert colour in the OBJ file, the decimate process produces horribly fractured meshes whenever I use it, etc etc. The end result of my Blender machinations was a horribly broken mesh.
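On the per-vert colour point: some tools store colour as three extra numbers on each OBJ `v` line (`v x y z r g b`). That is an unofficial extension rather than part of the OBJ format, which is why importers often ignore it. Assuming the exported file uses that layout (not checked against Medium's actual output), a rough Python sketch like this could pull positions and colours apart before the mesh goes anywhere near a decimator. The function name is made up for illustration.

```python
def split_vertex_colours(obj_text):
    """Split OBJ 'v' lines into positions and optional (r, g, b) colours.

    Assumes the unofficial 'v x y z r g b' layout; this is an assumption,
    not something the OBJ format itself guarantees.
    """
    positions, colours = [], []
    for line in obj_text.splitlines():
        parts = line.split()
        # Only plain vertex lines; 'vn', 'vt', 'f' and comments are skipped.
        if not parts or parts[0] != "v":
            continue
        values = [float(p) for p in parts[1:]]
        positions.append(tuple(values[:3]))
        # Six or more values: treat the 4th to 6th as r, g, b. Otherwise no colour.
        colours.append(tuple(values[3:6]) if len(values) >= 6 else None)
    return positions, colours
```

The two lists stay index-aligned, so colours could be re-attached by vertex index in whatever format the next tool does understand.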

I’m sure this stuff will get more solid as time goes on - and the Medium tool itself is great.

Phab 2 Pro

Which brings me to this morning’s toy. The first consumer Tango device finally made it into the UK Lenovo store yesterday (yes, I’ve been checking daily for a month now). “Shipping in 1-2 Weeks”, says the box. Ah well, it’ll be here before Christmas, hopefully, thinks I. And 17 hours later, it arrives! That’s the kind of estimate I really like.

I’m pretty confident that this device won’t provide a huge install base to sell apps into - the content just really isn’t there yet (a big motivator for me to ship Scanner) and it’s a bit of a strange beast, all round. It’s enormous - nearly twice the size of my previous phone area-wise. I’m really not sure if it’ll fit into a pocket. The hardware is decent, but it’s running an older version of Android than I’d like (and probably won’t receive many updates, if any), which will undoubtedly be a problem further down the line. If I want to continue developing for Tango, though, I need to have one - the Tango devkits are slipping further and further into unusability as time goes on (with one example being that Google services now crash, repeatedly, every 10 seconds or so, bringing popups on screen that stop whatever you’re working on).

I’m expecting the Tango system itself to generate functionally identical results to the Yellowstone devkit; hopefully I’ll have more details on that over the next few weeks. Ideally, the camera image will be improved, and then I can look at getting somewhat usable results back from the scans that Scanner makes.

This may not be the most in depth review of the device you’ve ever seen, but as I’ve only had it 90 minutes, you’ll just have to wait a bit longer for the skinny.

When I first started on this journey, I assumed that there would be some decent market penetration of a Tango enabled device by this point. I mean, who wouldn’t want this hardware on their phone? Like all things, the intersection of my dreams and reality is a messy place. Given the strategy machinations of Google, Lenovo, Motorola etc, and the (to my mind) super-weird rollout of the Phab, I’m going to be very surprised if this thing tops 100k units (honestly, I’ll be surprised if it tops 10k).

This is terrible in the short term - it will undoubtedly validate a load of naysayers all round who think Tango is a bad product strategy / expensive gamble (and I’m 100% sure there are plenty of those types of folks).

Of course, they’re wrong. People will want this, they just don’t know it yet. I’m hopeful that in the medium to long term, the next wave of Tango enabled devices does hit a wider audience. Asus has something coming soon, by all accounts, and Motorola might even do something in the Moto X space.

I’m still hopeful that Google will get the message and integrate this stuff into either Daydream or a standalone VR/AR headset device (and not gut the capabilities of Tango right now to achieve it).

Longer term, the platform will exist, in some shape or form. Whether that’s Tango, or something Apple-y, or something from Intel / Nvidia /

Scanner

Last, but not least, I’ve made a couple of improvements to the Scanner app itself - not all bug fixes! I finally got so annoyed by the Collada support in various apps that I wrote an OBJ exporter. As per usual, I was expecting this to take a long time, and it took less than a day. Why do all the things that look hard end up easy, and vice versa? I really have no idea. Anyway, the output zip file from Scanner can now be uploaded straight to Sketchfab, so we’re halfway to a decent workflow for sharing captures.
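Part of why an OBJ exporter is quick to write is that the format is just lines of text. The sketch below shows the general shape in Python; it is not Scanner's actual exporter, and the function names, the `scan.obj` file name and the simple triangle-list mesh layout are all assumptions made for illustration. It writes positions and triangle faces, then wraps the result in a zip.

```python
import zipfile


def mesh_to_obj(vertices, triangles):
    """Return OBJ text for (x, y, z) vertex tuples and 0-based index triples."""
    lines = ["# hypothetical exporter sketch"]
    for x, y, z in vertices:
        lines.append(f"v {x:.6f} {y:.6f} {z:.6f}")
    for a, b, c in triangles:
        # OBJ face indices are 1-based, so shift each index up by one.
        lines.append(f"f {a + 1} {b + 1} {c + 1}")
    return "\n".join(lines) + "\n"


def write_obj_zip(path_or_file, vertices, triangles, name="scan.obj"):
    """Write the mesh as a single OBJ entry inside a compressed zip."""
    with zipfile.ZipFile(path_or_file, "w", zipfile.ZIP_DEFLATED) as zf:
        zf.writestr(name, mesh_to_obj(vertices, triangles))
```

Calling `write_obj_zip("capture.zip", verts, tris)` would produce a zip holding one OBJ file. A real capture would also need normals, UVs and a texture or material file alongside it, which this sketch leaves out.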

Next week …

Mostly messing with Phab to get things working, I guess. Camera registration will have to wait another week!